When OpenAI Chief Executive Officer Sam Altman publicly acknowledged that the company’s newly secured agreement with the United States Department of Defense was “definitely rushed” and that “the optics don’t look good”, he was, in effect, admitting what many observers had already begun to suspect. The OpenAI DoD deal was struck shortly after negotiations between rival AI firm Anthropic and the Pentagon collapsed, and it raised immediate and pointed questions about OpenAI’s commitment to the ethical guardrails it had long championed. More broadly, it forced a reckoning within the artificial intelligence industry over what it means to draw a “red line” when national security contracts and geopolitical pressure enter the equation.

The sequence of events that precipitated the controversy unfolded swiftly. Talks between Anthropic and the Pentagon broke down on a Friday, after which President Donald Trump directed federal agencies to cease utilising Anthropic’s technology following a six-month wind-down period. Secretary of Defense Pete Hegseth went further, formally designating Anthropic as a supply-chain security risk, a striking escalation that effectively froze the company out of major government procurement. Within hours, OpenAI announced it had negotiated its own agreement, one that would permit its models to be deployed within classified government environments.

Competing Red Lines: Rhetoric Versus Reality

The speed of OpenAI’s announcement immediately prompted scrutiny. Both companies had publicly committed to identical ethical constraints, prohibiting the use of their models in fully autonomous weapons systems and in mass domestic surveillance operations, yet only one had managed to conclude a deal. The central question gnawing at critics, researchers, and consumers alike was whether OpenAI had quietly softened those commitments to secure the contract or whether its negotiating posture had simply been more accommodating from the outset.

OpenAI moved to address the scepticism through a detailed blog post in which it identified three explicit prohibitions governing the use of its models under the agreement: mass domestic surveillance, autonomous weapons systems, and what it described as “high-stakes automated decisions”, citing social credit-style scoring systems as a representative example. Furthermore, the company positioned its approach as more robust than that of competitors, arguing that other AI developers had “reduced or removed their safety guardrails and relied primarily on usage policies as their primary safeguards in national security deployments.” OpenAI, by contrast, claimed its agreement relied on a “more expansive, multi-layered approach”, one that included retained discretion over its safety infrastructure, cloud-based deployment, the presence of cleared OpenAI personnel, and explicit contractual protections. In a pointed aside, the company added that it did not know why Anthropic had been unable to reach a comparable agreement and expressed hope that other laboratories would consider doing so.

OpenAI DoD Deal: The Executive Order 12333 Controversy

The blog post did not quell the controversy. Within hours of its publication, technology commentator Mike Masnick of Techdirt challenged OpenAI’s framing, arguing that the contract language, properly read, did in fact permit a form of domestic surveillance. His analysis centred on a reference within the agreement to Executive Order 12333, a longstanding directive that critics have identified as a mechanism through which intelligence agencies, most notably the National Security Agency, conduct surveillance on communications transmitted through infrastructure located outside United States borders, even when those communications involve American citizens. By anchoring data collection practices to that order rather than prohibiting them outright, Masnick contended, the agreement left a significant loophole intact.

OpenAI’s head of national security partnerships, Katrina Mulligan, pushed back forcefully. In a LinkedIn post, she dismissed what she characterised as a reductive reading of the contract, arguing that critics were operating under the flawed assumption that a single usage-policy clause in a single government contract represented the sole safeguard between Americans and the prospect of AI-enabled surveillance. “That’s not how any of this works,” Mulligan wrote. She contended that the deployment architecture, specifically the restriction of OpenAI’s models to cloud-based API access, provided a structural barrier that prevented its systems from being embedded directly into weapons platforms, sensors, or other operational hardware. For Mulligan, the architecture of the deployment, not merely the language of the contract, was the operative safeguard.

OpenAI DoD Deal’s Market Backlash: Consumers Vote With Their Downloads

While the policy debate played out among executives, researchers, and legal commentators, consumers were rendering an altogether different verdict. According to data compiled by market intelligence firm Sensor Tower, uninstalls of ChatGPT’s mobile application in the United States surged by 295 per cent on a day-over-day basis on Saturday, February 28, a dramatic departure from the app’s typical day-over-day change in uninstalls of approximately nine per cent, as calculated over the preceding thirty days. The data mapped almost precisely onto the news cycle, with the spike coinciding directly with the public disclosure of the OpenAI-DoD agreement.

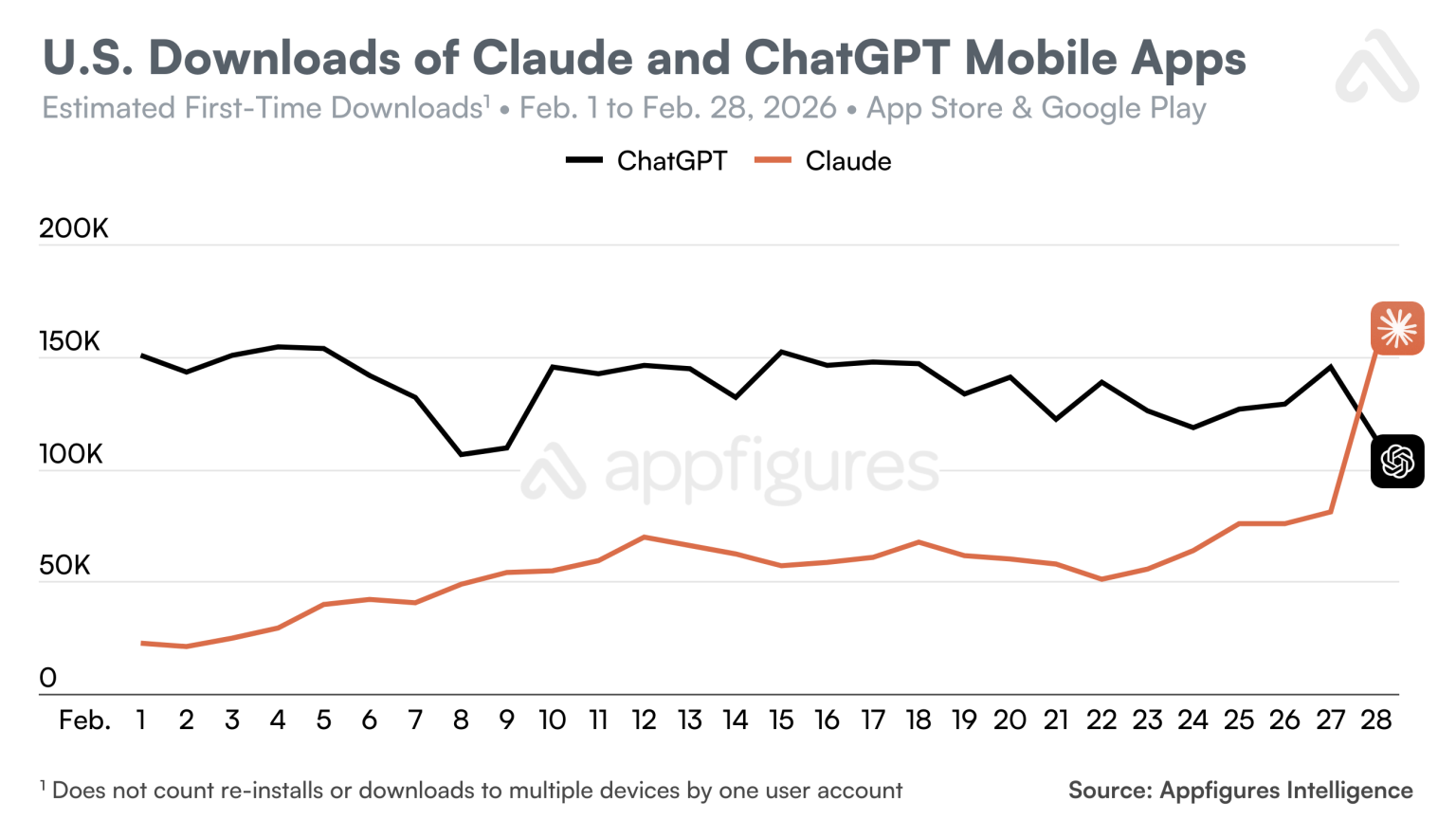

The same period produced a pronounced counter-movement in favour of Anthropic. U.S. downloads of Anthropic’s Claude application climbed 37 per cent day-over-day on Friday, February 27, the day Anthropic announced it would not proceed with a Pentagon partnership, and rose a further 51 per cent on Saturday, February 28. Competing data provider Appfigures placed that Saturday figure even higher, estimating an 88 per cent day-over-day increase. More strikingly, Appfigures reported that Claude’s total U.S. daily downloads on that Saturday exceeded those of ChatGPT for the first time in the applications’ respective histories, a threshold crossing that would have been difficult to anticipate even a week prior.

Claude Climbs the Charts as ChatGPT Ratings Collapse

The consumer reorientation registered across multiple metrics. ChatGPT’s U.S. downloads declined by 13 per cent on a day-over-day basis on the Saturday immediately following the OpenAI DoD deal’s announcement and fell a further five per cent on Sunday. Context made the downturn sharper: the app had recorded 14 per cent day-over-day download growth on the Friday before the partnership became public knowledge, suggesting that the deal itself, rather than any underlying market dynamic, drove the reversal.

Claude, meanwhile, ascended to the top position in the United States App Store on Saturday, a rise of more than 20 places from its standing approximately one week earlier, on February 22, 2026. As of Monday, March 2, it retained that position. Appfigures also noted that Claude had secured the number-one ranking among free iPhone applications in six countries beyond the United States: Belgium, Canada, Germany, Luxembourg, Norway, and Switzerland.

User sentiment expressed through formal ratings proved equally damaging for OpenAI. One-star reviews for ChatGPT surged 775 per cent on Saturday, according to Sensor Tower, then compounded further with a 100 per cent day-over-day increase on Sunday. Five-star ratings moved in the opposite direction, declining by 50 per cent over the same period. A third analytics firm, Similarweb, reported that Claude’s U.S. downloads over the preceding week had reached approximately twenty times their January volume, though the firm cautioned that factors beyond the political controversy may have contributed to that figure.

What the OpenAI DoD Deal Reveals About AI’s Political Moment

Altman, answering questions publicly on the social platform X, offered his own interpretation of the strategic calculus behind the agreement. The OpenAI DoD deal, he acknowledged, had generated a backlash severe enough that Anthropic’s Claude had briefly overtaken ChatGPT in Apple’s App Store rankings. His justification was framed in explicitly industry-wide terms. “We really wanted to de-escalate things, and we thought the deal on offer was good,” he said. If the agreement succeeded in reducing tensions between the Department of War (the Pentagon’s rebranded designation under the Trump administration) and the broader AI sector, he suggested, OpenAI would be credited with absorbing considerable reputational damage in service of a wider good. If it did not, the characterisation of the decision as rushed and careless would persist.

The episode ultimately illuminates a structural tension that has come to define the current moment in artificial intelligence governance. Companies operating at the frontier of AI capability face simultaneous pressure from government actors seeking operational access to powerful systems and from civil society constituencies demanding that those systems not be weaponised against democratic norms. OpenAI’s decision to proceed, and the consumer response that followed, suggest that, at least for now, a meaningful portion of the public is watching those choices closely and drawing its own conclusions.