
In 2022, OpenAI introduced ChatGPT, sparking a revolution in AI technology by making the chatbot available to all. Since then, Google has accelerated its own AI development, launching the Bard chatbot and later the Gemini family of models to compete with and surpass existing AI systems. Gemini can handle text, images, and reasoning-heavy tasks, pushing AI beyond purely language-based applications. However, despite these rapid advancements, the dream of truly autonomous and intelligent robotics remains largely unfulfilled.

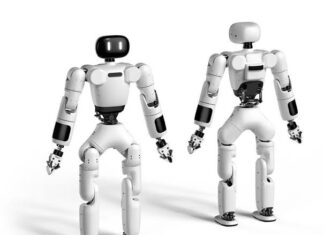

Well, the wait is almost over. Just three days ago, Google DeepMind officially launched two AI models, Gemini Robotics and Gemini Robotics-ER (Embodied Reasoning), marking a significant advancement in integrating artificial intelligence with robotics. These models are specifically designed to enhance robots’ ability to understand and interact with the physical world, moving beyond digital boundaries so that robots can perform complex real-world tasks.


Gemini Robotics

Built on Gemini 2.0, Gemini Robotics is a vision-language-action (VLA) model that gives robots three key capabilities:

- Generality: it can adapt to novel situations, handle unfamiliar objects, follow diverse instructions, and operate in new environments without task-specific training.

- Interactivity: it responds quickly to natural language instructions and to real-time changes in its environment, which supports smoother human-robot collaboration.

- Dexterity: it has improved fine motor skills, enabling intricate tasks such as folding origami or packing delicate items.

Gemini Robotics-ER

Gemini Robotics-ER focuses on embodied reasoning. It has enhanced visual and spatial understanding, and roboticists can connect it to their own systems and controllers so that robots can:

- Interpret and navigate complex physical spaces.

- Plan and execute tasks requiring intricate spatial awareness, such as efficiently packing objects into confined spaces.

This model integrates perception, state estimation, spatial reasoning, planning, and code generation, offering roboticists a comprehensive tool for developing more intelligent and responsive machines.
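To make that chain of stages more concrete, here is a toy sketch of how perception, state estimation, spatial reasoning, planning, and code generation might feed into one another for a simple packing task. It is only an illustration under stated assumptions: every name in it (`Detection`, `run_pipeline`, and the `robot.pick` / `robot.place` commands it emits) is hypothetical, and none of it is Google DeepMind's API or method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One object seen on a table (hypothetical type, integer centimetres)."""
    name: str
    x_cm: int       # distance from the robot along the table
    width_cm: int   # how much room the object needs in the box


def perceive(raw_detections):
    """Perception: turn raw (name, x, width) tuples into typed detections."""
    return [Detection(name, x, w) for name, x, w in raw_detections]


def estimate_state(detections):
    """State estimation: keep one entry per object, the latest sighting wins."""
    return {d.name: d for d in detections}


def select_objects_that_fit(state, box_width_cm):
    """Spatial reasoning: greedily pick objects, widest first, that fit side by side."""
    chosen, used = [], 0
    for d in sorted(state.values(), key=lambda d: d.width_cm, reverse=True):
        if used + d.width_cm <= box_width_cm:
            chosen.append(d)
            used += d.width_cm
    return chosen


def plan(chosen):
    """Planning: handle the nearest object first, one pick and one place each."""
    steps = []
    for d in sorted(chosen, key=lambda d: d.x_cm):
        steps.append(("pick", d.name))
        steps.append(("place", d.name))
    return steps


def generate_code(steps):
    """Code generation: emit command strings for a hypothetical `robot` object."""
    lines = []
    for action, name in steps:
        if action == "pick":
            lines.append(f'robot.pick("{name}")')
        else:
            lines.append(f'robot.place("{name}", "box")')
    return lines


def run_pipeline(raw_detections, box_width_cm):
    """Chain the five stages from raw detections to generated commands."""
    state = estimate_state(perceive(raw_detections))
    return generate_code(plan(select_objects_that_fit(state, box_width_cm)))


if __name__ == "__main__":
    scene = [("mug", 40, 10), ("book", 10, 25), ("lamp", 70, 30)]
    for line in run_pipeline(scene, box_width_cm=40):
        print(line)
```

In this toy scene the lamp and the mug fit into a 40 cm box together while the book does not, so the generated commands cover only those two objects, nearest first. A real system would replace each stage with learned models and physical sensing; the point here is only the order in which the stages hand results to one another.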

These innovations build on several years of research. Google Brain pioneered the integration of deep learning across Google’s products, and the Everyday Robots project explored AI-driven robots for daily tasks. Together, these initiatives laid the groundwork for Gemini Robotics, which is now bringing AI closer to real-world applications.

The demonstration videos for Gemini Robotics-ER featured Apollo, a humanoid robot from the startup Apptronik, with which Google DeepMind is partnering. Additionally, trusted testers, including Agile Robots, Agility Robotics, Boston Dynamics, and Enchanted Tools, are working with the models to refine and expand their applications.

The introduction of Gemini Robotics and Gemini Robotics-ER signifies a pivotal moment in robotics, bringing AI closer to seamlessly integrating into daily life and performing tasks with human-like understanding and precision.