
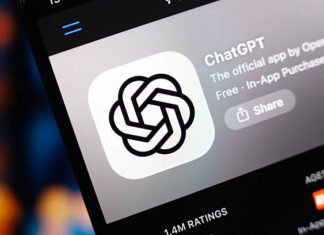

OpenAI faces another privacy complaint in Europe over its viral AI chatbot’s tendency to hallucinate false information. Interestingly, this ChatGPT privacy complaint might prove tricky for regulators to ignore.

ChatGPT Privacy Complaint Alleges Serious GDPR Breach

Privacy rights advocacy group Noyb is backing an individual in Norway who was horrified to find ChatGPT returning made-up information claiming he had been convicted of murdering two of his children and attempting to kill the third.

Earlier privacy complaints about ChatGPT generating incorrect personal data have involved issues such as wrong birth dates or biographical details. One concern is that OpenAI does not offer a way for individuals to correct inaccurate information the AI generates about them. Typically, OpenAI has offered to block responses for such prompts. However, the European Union’s General Data Protection Regulation (GDPR) gives Europeans a suite of data access rights, including a right to have inaccurate personal data rectified.

GDPR Obligations vs OpenAI’s Disclaimers

The data protection law also requires data controllers to ensure that the personal data they produce about individuals is accurate. That is the concern Noyb is raising with its latest ChatGPT privacy complaint.

“The GDPR is clear. Personal data has to be accurate,” said Joakim Söderberg, a data protection lawyer at Noyb. He added, “If not, users can change it to reflect the truth. Showing ChatGPT users a tiny disclaimer that the chatbot can make mistakes isn’t enough. You can’t just spread false information and add a small disclaimer saying that everything you said may not be true.”

Confirmed breaches of the GDPR can lead to penalties of up to 4% of global annual turnover.

Enforcement could also force changes to AI products. For example, an early GDPR intervention by Italy’s data protection watchdog, which saw ChatGPT access temporarily blocked in the country in spring 2023, led OpenAI to change the information it discloses to users. The watchdog subsequently fined OpenAI €15 million for processing people’s data without a proper legal basis.

Since then, it’s fair to say that privacy watchdogs around Europe have adopted a more cautious approach to GenAI as they try to figure out how best to apply the GDPR to these buzzy AI tools.

Real-World Harm from False AI Claims

Two years ago, Ireland’s Data Protection Commission (DPC), which has a lead GDPR enforcement role on a previous Noyb ChatGPT complaint, urged against rushing to ban GenAI tools, for example. This suggests that regulators should instead take time to work out how the law applies.

Notably, a privacy complaint against ChatGPT that has been under investigation by Poland’s data protection watchdog since September 2023 still hasn’t yielded a decision. Noyb’s new ChatGPT privacy complaint looks intended to shake privacy regulators awake when it comes to the dangers of hallucinating AIs.

The nonprofit shared a screenshot showing an interaction with ChatGPT in which the AI responds to the question, “Who is Arve Hjalmar Holmen?” (the name of the individual bringing the complaint). ChatGPT produced a tragic fiction that falsely states he was convicted of child murder and sentenced to 21 years in prison for slaying two of his sons.

Further Updates on GPT’s False Claim

While the defamatory claim that Hjalmar Holmen is a child murderer is entirely false, Noyb notes that ChatGPT’s response does include some truths, since the individual in question does have three children. The chatbot also got the genders of his children right and named his hometown correctly. That makes it all the more bizarre and unsettling that the AI hallucinated such gruesome falsehoods on top.

A spokesperson for Noyb said they could not determine why the chatbot produced such a specific yet false history for this individual. “We researched to make sure that this wasn’t just a mix-up with another person,” the spokesperson said. They also noted that they had looked into newspaper archives but hadn’t been able to find an explanation for why the AI fabricated the child slayings.

ChatGPT Privacy Complaint: European Regulators Struggle with Enforcement Delays

Noyb’s contention in this ChatGPT privacy complaint is that such fabrications are unlawful under EU data protection rules. OpenAI does display a tiny disclaimer at the bottom of the screen that says, “ChatGPT can make mistakes. Check important info.” Noyb says this cannot absolve the AI developer of its duty under GDPR not to produce egregious falsehoods about people in the first place.

While this GDPR complaint pertains to one individual, Noyb points to other instances of ChatGPT fabricating legally compromising information. These include an Australian mayor who said ChatGPT had falsely implicated him in a bribery and corruption scandal, and a German journalist who was falsely named as a child abuser. Noyb says it’s clear that this isn’t an isolated issue for the AI tool.

One important thing to note is that, following an update to the underlying AI model powering ChatGPT, Noyb says the chatbot stopped producing the dangerous falsehoods about Hjalmar Holmen.

“AI companies should stop acting as if the GDPR does not apply to them when it does,” said Kleanthi Sardeli, another data protection lawyer at Noyb. “If hallucinations are not stopped, people can easily suffer reputational damage.”

Final Notes

Noyb has filed the ChatGPT privacy complaint against OpenAI with the Norwegian data protection authority. It hopes the watchdog will decide it is competent to investigate, since Noyb is targeting the complaint at OpenAI’s U.S. entity and arguing that its Ireland office is not solely responsible for product decisions impacting Europeans.

However, an earlier Noyb-backed GDPR complaint against OpenAI, filed in Austria in April 2024, was referred by the regulator to Ireland’s DPC. The referral followed a change OpenAI made earlier that year to name its Irish division as the provider of the ChatGPT service to regional users.

“Having received the complaint from the Austrian Supervisory Authority in September 2024, the DPC commenced the formal handling of the complaint, and it is still ongoing,” Risteard Byrne, assistant principal officer of communications for the DPC, confirmed to reporters.

He did not, however, offer any guidance on when the DPC’s investigation of ChatGPT’s hallucinations is likely to come to a conclusion.